What is Data Lake Architecture?

In this multi-part series, we will take you through the architecture of a Data Lake. We can explore data lake architecture across three dimensions:

- Part I – Storage and Data Processing

- Introduction

- Physical Storage

- Data Processing ETL/ELT

- Part II – File Formats, Compression, and Security

- File Formats and Data Compression

- Design Security

- Part III – Data Catalog and Data Mining

- Data Catalog, Metadata, and Search

- Access and Mine the Lake

In this edition, we look at Storage and Data processing.

Data Lake Architecture

Data Lake architecture is all about storing large amounts of data which can be structured, semi-structured, or unstructured, e.g. web server logs, RDBMS data, NoSql data, social media, sensors, IoT data, and third-party data. A data lake can store the data in the same format as its source systems or transform it before storing it.

Why Use a Data Lake?

The main purpose of a data lake is to make organizational data from different sources, accessible to a variety of end-users like business analysts, data engineers, data scientists, product managers, executives, etc, in order to enable these personas to leverage insights in a cost-effective manner, for improved business performance. Today, many forms of advanced analytics are only possible on data lakes.

In order to create a data lake, we should take care of the data accuracy between the source and target schema. One example could be a record count match between source and destination systems.

Data Lake Physical Storage

The foundation of any data lake design and implementation is physical storage. The core storage layer is used for the primary data assets. Typically it contains raw and/or lightly processed data. The key considerations while evaluating technologies for cloud-based data lake storage are the following principles and requirements:

Data Lake Scalability

An enterprise data lake is often intended to store centralized data for an entire division or the company at large, hence it must be capable of significant scaling without running into fixed arbitrary capacity limits.

High durability

As a primary repository of critical enterprise data, the exceptionally high durability of the core storage layer allows for excellent data robustness without resorting to extreme high-availability designs.

Unstructured, semi-structured, and structured data

One of the primary design considerations of a data lake is the capability to store data of all types in a single repository. e.g. XML, Text, JSON, Binary, CSV etc.

Independence from fixed schema

Schema evolution is common in the big data age. The ability to apply schema on read, as needed for each consumption purpose, can only be accomplished if the underlying core storage layer does not dictate a fixed schema.

Separation from compute resources

The most significant philosophical and practical advantage of cloud-based data lakes as compared to “legacy” big data storage on Hadoop/HDFS, is the ability to decouple storage from compute and enabling independent scaling of each.

Complementary to existing data warehouses

Data warehouse and data lake can work in conjunction for a more integrated data strategy.

Cost-Effective

Open source has zero subscription costs, allowing your system to quickly scale as data grows. Handling cold/hot/warm data and data models, along with appropriate compression techniques, are useful to prevent cost growth exponentially.

Given the requirements, object-based stores have become the de facto choice for core data lake storage. AWS, Google, and Azure all offer object storage technologies. e.g. S3, Blob storage, ADLS, etc.

Data Lake Data Processing – ETL/ELT

Below are different types of data processing based on SLA:

Real-time

– Order of seconds refresh

Real-time processing requires continuous input, constant processing, and a steady output of data. Good examples of real-time processing are data streaming, radar systems, customer service systems, and bank ATMs, where immediate processing is crucial to make the system work properly.

Want real-time analytics? Model your storage right or bust. Watch this session by Matt Falk, Oribtal Insight

Near-Real time

– Order of minutes refresh

Near real-time processing is when speed is important, but processing time in minutes is acceptable in lieu of seconds. An example of near real-time processing is the production of operational intelligence, which is a combination of data processing and CEP (Complex Event Processing). CEP involves combining data from multiple sources in order to detect patterns and is useful to identify opportunities in the data sets (such as sales leads) as well as threats (detecting an intruder in the network).

Batch

– Hourly/Daily/Weekly/Monthly refresh

Batch processing is even less time-sensitive than near real-time. Batch processing involves three separate processes. Firstly, data is collected, usually over a period of time. Secondly, the data is processed using another separate program. Thirdly, the output is another set of data. Examples of data entered for analysis can include operational data, historical and archived data, data from social media, service data, etc. In general, MapReduce-based solutions are useful for batch processing and for analytics that are not real-time or near real-time. Examples of batch processing use cases include payroll and billing activities usually occurring on a monthly cycle, and deep analytics that are not essential for fast intelligence but necessary for immediate decision making.

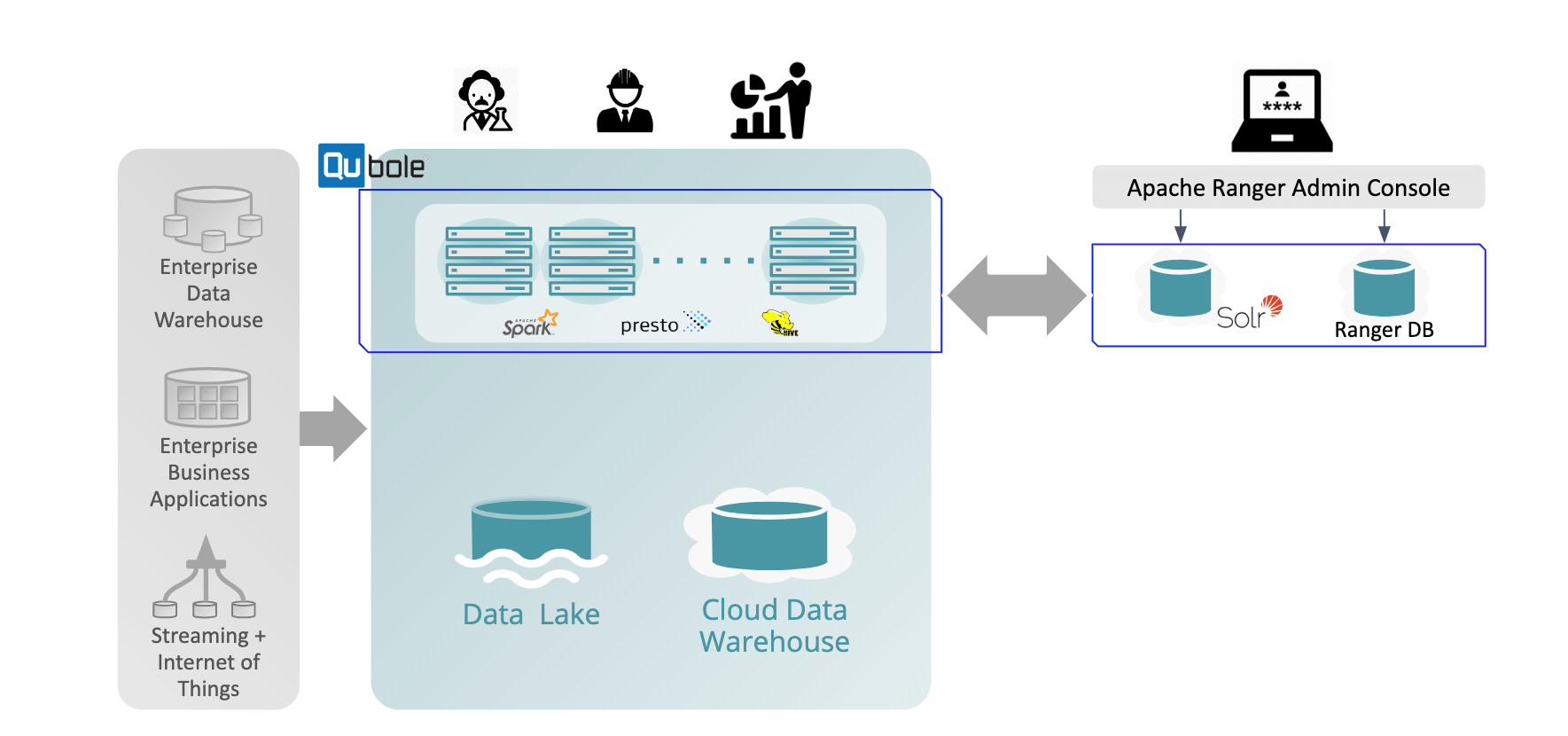

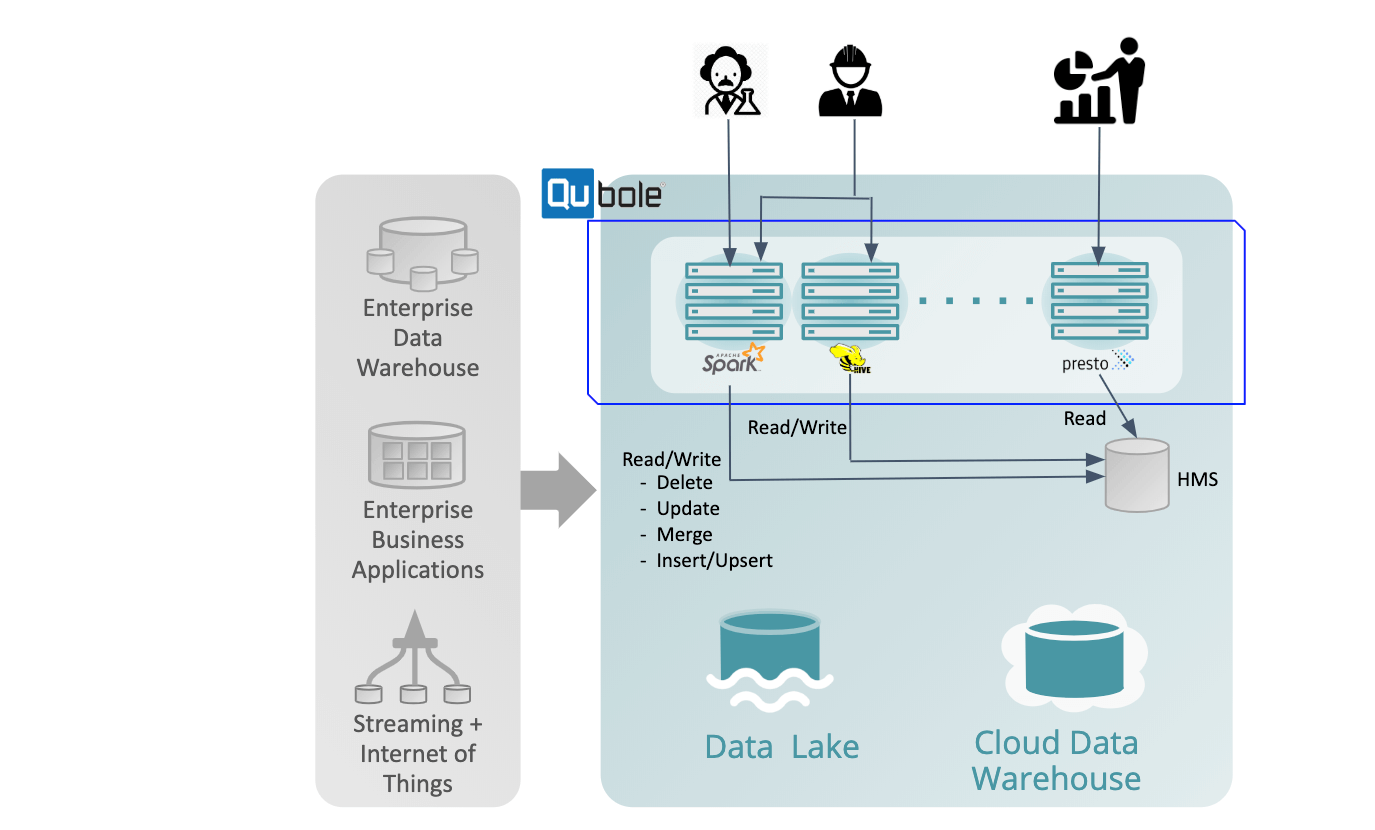

Accelerating your Data Lake deployment with Qubole –

- Multi-cloud offering – Helps you avoid cloud vendor lock-in by offering a native multi-cloud platform along with support for their corresponding native storage options: AWS S3 Object Store, Azure data lake and Blob, Google Cloud Storage

- Unified data environment – with connectivity to traditional Data Warehouses and NoSQL databases (on-premise or in the cloud)

- Intelligent and automatic response – to both storage and compute needs of bursty and unpredictable big data workloads. It evaluates the current load on the cluster in terms of compute power and intelligently predicts how many additional workers are needed to complete the workload within a reasonable time. Qubole automatically upscales the storage capacity of individual nodes that are nearing full capacity.

- Support for various mechanisms – for Encrypting data at rest in coordination with your choice of the cloud vendor.

- Multiple distributed big data engines – Spark, Hive, Presto, and common frameworks like Airflow. Multiple engines allow data teams to solve a wide variety of big data challenges using a single unified data platform.

- User-friendly UI for data exploration (Workbench) – as well as a built-in scheduler for sequential workflows to accelerate time to value.

- Support for Python/Java SDKs and REST APIs – allows easy integration to your applications.

- Ingestion and processing from real-time streaming data sources – allowing your data teams to address use cases that call for immediate action and analysis.

Integration with popular ETL platforms – such as Talend, and Informatica accelerates adoption by traditional data teams. - Multiple facilities for data Import/Export – using different tools and connectors, data teams can import the data into Qubole, run various analyses and then export the result to your favorite visualization engine.

Continue Reading: Data Lake Essentials, part 2 – file formats, compression, and security

Additional references

- https://www.qubole.com/blog/optimizing-s3-bulk-listings-for-performant-hive-queries/

- https://www.qubole.com/blog/auto-scaling-in-qubole-with-aws-elastic-block-storage/

- https://www.qubole.com/blog/recover-partitions-performance-spark-on-qubole/

- https://www.qubole.com/product/architecture/common-user-interface/

- https://docs.qubole.com/en/latest/user-guide/data-engineering/quest/quest-intro.html

- https://docs.qubole.com/en/latest/rest-api/

- https://github.com/qubole/qds-sdk-py

- https://docs.qubole.com/en/latest/connectivity-options/partner-integration/talend_integration_guides/talend-integration/index.html

- https://marketplace.informatica.com/listings/on-premise/data-integration/qubole.html

- https://www.qubole.com/resources/qubole-bulk-import-export-data-rdbmses/