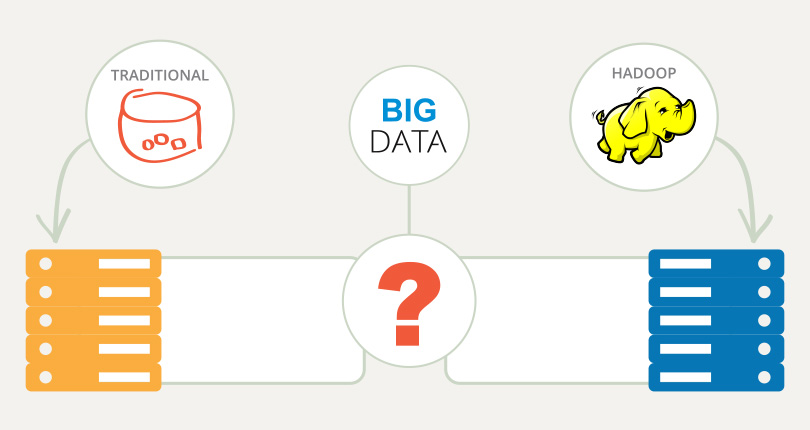

Today’s ultra-connected world is generating massive volumes of data at ever-accelerating rates. As a result, big data analytics has become a powerful tool for businesses looking to leverage mountains of valuable data for profit and competitive advantage. In the midst of this big data rush, Hadoop, as an on-premises or cloud-based platform, has been heavily promoted as the one-size-fits-all solution for the business world’s big data problems. While analyzing big data using Hadoop has lived up to much of the hype, there are certain situations where running workloads on a traditional database may be the better solution.

For companies comparing big data platforms to find out which one will better serve their use cases, here are some key questions to ask when choosing between a Hadoop platform (including cloud-based Hadoop services such as Qubole) and a traditional database.

Is Hadoop a Database?

Hadoop is not a database, but rather an open-source software framework built to store and process large volumes of structured, semi-structured, and unstructured data across clusters of servers.

Organizations considering using Hadoop for big data analysis should evaluate whether their current or future data needs require the type of capabilities Hadoop offers.

Structured vs Unstructured Data

Structured Data

Data that resides within the fixed confines of a record or file is known as structured data. Because structured data, even in large volumes, can be entered, stored, queried, and analyzed in a simple and straightforward manner, this type of data is best served by a traditional database.
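To make the idea of a fixed record layout concrete, here is a minimal sketch using Python’s built-in `sqlite3` module as a stand-in for a traditional database. The `orders` table, its columns, and the sample rows are hypothetical and exist only for illustration.

```python
import sqlite3


def daily_totals(rows):
    """Load fixed-schema rows into an in-memory table and total them per day."""
    conn = sqlite3.connect(":memory:")
    # Every row must fit this schema; that constraint is what makes the data "structured".
    conn.execute(
        "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, day TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    result = conn.execute(
        "SELECT day, SUM(amount) FROM orders GROUP BY day ORDER BY day"
    ).fetchall()
    conn.close()
    return result


# Hypothetical sample data: (order_id, day, amount)
sample_orders = [
    (1, "2024-01-01", 20.0),
    (2, "2024-01-01", 5.5),
    (3, "2024-01-02", 12.0),
]

print(daily_totals(sample_orders))
# [('2024-01-01', 25.5), ('2024-01-02', 12.0)]
```

Because the schema is known in advance, a single declarative query is enough to answer the question.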

Unstructured Data

Data that comes from a variety of sources, such as emails, text documents, videos, photos, audio files, and social media posts, is referred to as unstructured data. Because it is both complex and voluminous, unstructured data is difficult to store and query efficiently in a traditional database. Hadoop’s ability to join, aggregate, and analyze vast stores of multi-source data without having to structure it first allows organizations to gain deeper insights. Hadoop is therefore a strong fit for companies looking to store, manage, and analyze large volumes of unstructured data.
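One way to picture analysis without upfront structuring is the map, shuffle, and reduce pattern that Hadoop’s MapReduce engine popularized. The sketch below imitates that pattern in plain, single-process Python; it does not use any Hadoop API, and the function names and sample documents are assumptions made for illustration.

```python
import re
from collections import defaultdict


def map_phase(document):
    """Emit a (word, 1) pair for every word found in one raw text document."""
    return [(word, 1) for word in re.findall(r"[a-z']+", document.lower())]


def shuffle(pairs):
    """Group emitted values by key, as a cluster would do between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Collapse each key's list of values into a single count."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(documents):
    """Run map, shuffle, and reduce over a list of raw text documents."""
    pairs = [pair for doc in documents for pair in map_phase(doc)]
    return reduce_phase(shuffle(pairs))


# Hypothetical free-text inputs, e.g. an email subject line and a product review.
raw_documents = [
    "Subject: late delivery. The order arrived late.",
    "Loved the product! Delivery was fast.",
]

counts = word_count(raw_documents)
print(counts["delivery"], counts["late"])  # 2 2
```

No table or schema is defined anywhere: structure is imposed only at the moment the text is read. On a real cluster, the map and reduce steps would run in parallel across many machines.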

When to Use Hadoop vs RDBMS

Companies whose data workloads are constant and predictable will be better served by a traditional database.

Companies challenged by increasing data demands will want to take advantage of Hadoop’s scalable infrastructure. Scalability allows servers to be added on demand to accommodate growing workloads. As a cloud-based Hadoop service, Qubole offers more flexible scalability by spinning virtual servers up or down within minutes to better accommodate fluctuating workloads.

Is Hadoop Cost-Effective?

Cost-effectiveness is always a concern for companies looking to adopt new technologies. When considering a Hadoop implementation, companies need to do their homework to make sure that the realized benefits of a Hadoop deployment outweigh the costs. Otherwise, it would be best to stick with a traditional database to meet data storage and analytics needs.

That said, several factors can make a Hadoop implementation more cost-effective than companies may realize. For one thing, Hadoop saves money by combining open-source software with commodity servers. Cloud-based Hadoop platforms such as Qubole reduce costs further by eliminating the expense of physical servers and the space needed to house them.

Hybrid systems, which integrate Hadoop platforms with traditional relational databases, are gaining popularity as cost-effective ways for companies to leverage the benefits of both platforms.

Is Fast Data Analysis Critical?

Hadoop was designed for large-scale distributed batch processing that reads every file in a data set, and that type of processing takes time. For tasks where fast performance isn’t critical, such as running end-of-day reports to review daily transactions, scanning historical data, and performing analytics where a slower time-to-insight is acceptable, Hadoop is ideal.

On the other hand, in cases where organizations rely on time-sensitive data analysis, a traditional database is the better fit. That’s because shorter time-to-insight isn’t about analyzing large unstructured data sets, which Hadoop does so well. It’s about analyzing smaller data sets in real time or near real time, which is what traditional databases are well equipped to do.
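The contrast between the two access patterns can be sketched in a few lines of Python. This is a toy, single-machine illustration rather than a benchmark: the record layout is hypothetical, and a dictionary stands in for the kind of index a traditional database maintains.

```python
def scan_total(records):
    """Batch style: read every record to compute an aggregate."""
    return sum(amount for _, amount in records)


def build_index(records):
    """Interactive style: build a lookup structure once, then answer point queries."""
    return {record_id: amount for record_id, amount in records}


# Hypothetical records: (record_id, amount)
records = [(i, i * 2) for i in range(1000)]
index = build_index(records)

print(scan_total(records))  # 999000, computed by touching all 1000 records
print(index[42])            # 84, found without scanning the rest
```

The full scan does work proportional to the size of the data set, which suits end-of-day reports. The indexed lookup answers a narrow question almost immediately, which suits interactive workloads.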

Hybrid systems are also worth considering, as they allow companies to use traditional databases to run small, highly interactive workloads while using Hadoop to process huge, complex data sets.

Which Big Data Platform is Best?

That all depends. While the benefits of big data analytics in providing deeper insights that lead to competitive advantage are real, those benefits can only be realized by companies that exercise due diligence in making sure that using Hadoop for big data analysis best serves their needs. Let us know if we can help in your big data platform comparison.